Deep Dive: Understanding CUDA, TensorRT, and Deep Live Cam Architecture

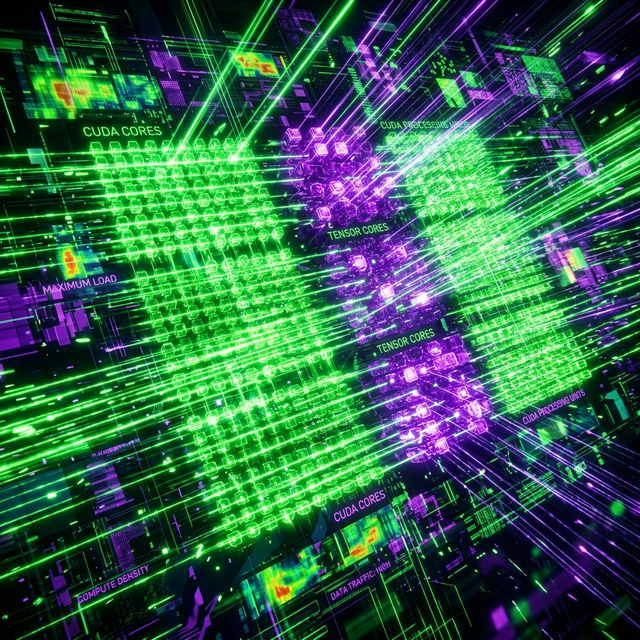

Power users need more than the "Start" button in the Deep Live Cam GUI. If you want to push the software to 4K resolution or run several face swaps at once on a single stream, you need to understand the engine underneath. At the heart of this real-time pipeline sit two NVIDIA technologies: CUDA and TensorRT.
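To put "4K" and "multiple face swaps" into numbers, here is a small sketch of the arithmetic. It assumes a 30 fps target and that the faces in a frame are swapped one after another; the function names are illustrative and are not part of Deep Live Cam.

```python
# Per-frame time budget for a live stream (illustrative arithmetic only,
# not Deep Live Cam code). Assumes faces are processed one after another.

def frame_budget_ms(fps: float) -> float:
    """Milliseconds available to process one frame at the given frame rate."""
    return 1000.0 / fps

def per_face_budget_ms(fps: float, faces: int) -> float:
    """Budget per face if every face in a frame is swapped sequentially.

    Simplification: ignores capture, detection, and display overhead.
    """
    return frame_budget_ms(fps) / faces

def pixel_ratio(width_a: int, height_a: int, width_b: int, height_b: int) -> float:
    """How many times more pixels resolution A has than resolution B."""
    return (width_a * height_a) / (width_b * height_b)

if __name__ == "__main__":
    print(f"30 fps budget: {frame_budget_ms(30):.1f} ms per frame")
    print(f"3 faces at 30 fps: {per_face_budget_ms(30, 3):.1f} ms per face")
    print(f"4K vs 1080p: {pixel_ratio(3840, 2160, 1920, 1080):.0f}x the pixels")
```

At 30 fps every frame must be finished in about 33 ms, three faces leave roughly 11 ms each before any other overhead, and a 4K frame carries four times the pixels of a 1080p frame.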

The Calculation Chasm: CPU vs GPU

A central processing unit (CPU) is like a brilliant professor: it can work through very complex problems, largely one step at a time, but it has only a few "brains" (cores). An AI model like `inswapper_128.onnx` does not need complex branching logic; it needs millions of simple multiply-add operations (the building blocks of matrix multiplications) carried out at the same time to compute the pixels of the swapped face, from skin-tone gradients to pixel placement.
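The sketch below shows what "simple math" means here: each element of a matrix product is a dot product, which is just a chain of multiply-add steps, and no output element depends on any other. The layer shape used in the example (64 channels mixed into 64 at each of 128 × 128 positions) is hypothetical and is not taken from `inswapper_128.onnx`.

```python
# A matrix product is many independent dot products, and each dot product is a
# chain of multiply-add (MAC) steps. Plain Python, no libraries needed.

def dot(row, col):
    """One output element, computed as a sequence of multiply-adds."""
    total = 0.0
    for a, b in zip(row, col):
        total += a * b  # one multiply-add
    return total

def matmul(a, b):
    """Naive C = A @ B for lists of lists, with A of shape m x k and B of shape k x n."""
    cols = list(zip(*b))  # the n columns of B
    # C[i][j] depends only on row i of A and column j of B, so all m * n
    # output cells are independent and could be computed at the same time.
    return [[dot(row, col) for col in cols] for row in a]

def mac_count(m, k, n):
    """Number of multiply-adds in an (m x k) @ (k x n) product."""
    return m * k * n

if __name__ == "__main__":
    print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19.0, 22.0], [43.0, 50.0]]
    # Hypothetical layer: mix 64 channels into 64 at each of 128 x 128 positions.
    print(mac_count(128 * 128, 64, 64))  # 67108864
```

Even this one small hypothetical layer needs about 67 million multiply-adds per frame, and because the output cells are independent, the work splits naturally across many parallel workers.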

A graphics card (GPU) programmed through CUDA is more like thousands of less capable but very fast students, each solving one small piece of the same problem at the same moment. This massively parallel architecture is what makes frame-by-frame analysis of a live video feed practical in real time.
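CUDA expresses this "many students" model by launching a grid of thread blocks: every thread runs the same function and uses its block and thread index to pick the one element it works on. The plain-Python simulation below only illustrates that indexing scheme and runs sequentially; `saxpy_kernel`, `launch`, and `run_saxpy` are hypothetical names, not a CUDA API.

```python
# Plain-Python simulation of CUDA's grid/block/thread indexing. Illustration
# only: the loops run sequentially, whereas a GPU runs the threads concurrently.

def saxpy_kernel(block_idx, block_dim, thread_idx, a, x, y, out):
    """What every simulated thread runs: out[i] = a * x[i] + y[i] for its own i."""
    i = block_idx * block_dim + thread_idx  # global index, as in CUDA C
    if i < len(x):  # bounds check: the last block may contain spare threads
        out[i] = a * x[i] + y[i]

def launch(kernel, n, block_dim, *args):
    """Mimic a kernel<<<grid_dim, block_dim>>> launch covering n elements."""
    grid_dim = (n + block_dim - 1) // block_dim  # ceil(n / block_dim) blocks
    for block_idx in range(grid_dim):          # on a GPU: blocks run in parallel
        for thread_idx in range(block_dim):    # on a GPU: threads run in parallel
            kernel(block_idx, block_dim, thread_idx, *args)
    return grid_dim

def run_saxpy(a, x, y, block_dim=256):
    """Allocate the output, launch the simulated kernel, return (blocks, result)."""
    out = [0.0] * len(x)
    blocks = launch(saxpy_kernel, len(x), block_dim, a, x, y, out)
    return blocks, out

if __name__ == "__main__":
    x = [float(v) for v in range(10)]
    y = [1.0] * 10
    print(run_saxpy(2.0, x, y, block_dim=4))
```

On a real GPU the two nested loops in `launch` disappear: the hardware schedules the threads concurrently, and the bounds check keeps the spare threads in the last block from writing past the end of the data.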

TensorRT: The Elite Compiler

While CUDA provides the raw muscle, TensorRT provides the aerodynamics. When Deep Live Cam loads a model, that model is an ONNX (Open Neural Network Exchange) file: a generic blueprint designed to run across many platforms. TensorRT is NVIDIA's SDK for optimizing and running deep learning inference. It takes that generic blueprint and compiles it into an engine tuned for the specific GPU installed in your machine.

- It fuses adjacent network layers into single GPU kernels, reducing memory traffic and kernel-launch overhead.

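- It can lower numerical precision (from FP32 to FP16 or INT8) where full precision isn't needed. On GPUs with Tensor Cores this can substantially raise throughput, often with little or no visible degradation; INT8 additionally requires calibration (or a pre-quantized model) to preserve accuracy.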
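Both ideas can be illustrated in plain Python. This is a toy model, not TensorRT's implementation: fusing a bias-add with a ReLU gives the same result in one pass over the data instead of two, and rounding a normalized 8-bit pixel value to FP16 (via the standard `struct` module's `e` format) loses far less than one 8-bit level. It models the rounding of a single value only; errors that accumulate across a full network are not captured.

```python
import struct

# 1) Layer fusion: bias-add followed by ReLU.
def unfused(xs, bias):
    """Two separate 'layers': each one walks over the whole tensor."""
    tmp = [x + bias for x in xs]          # pass 1: write an intermediate tensor
    return [max(t, 0.0) for t in tmp]     # pass 2: read it back, write the result

def fused(xs, bias):
    """One fused 'layer': a single pass, no intermediate tensor kept in memory."""
    return [max(x + bias, 0.0) for x in xs]

# 2) Reduced precision: round-trip a value through IEEE 754 half precision (FP16).
def to_fp16(value):
    """Round a Python float to the nearest FP16 value ('e' format in struct)."""
    return struct.unpack("<e", struct.pack("<e", value))[0]

if __name__ == "__main__":
    xs = [-1.5, 0.25, 2.0]
    print(unfused(xs, 0.5) == fused(xs, 0.5))  # True: same math, fewer passes
    pixel = 173 / 255  # an 8-bit pixel value normalized to [0, 1]
    error_in_levels = abs(pixel - to_fp16(pixel)) * 255
    print(pixel, to_fp16(pixel), error_in_levels)
```

For every 8-bit value from 0 to 255, the FP16 rounding error stays well below half a level, so converting back to 8 bits recovers the original value.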

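By setting your Deep Live Cam launcher arguments to prefer `tensorrt` over generic `cuda`, you let ONNX Runtime hand every part of the model that TensorRT supports to an engine compiled for your specific GPU, rather than running the generic graph through standard CUDA kernels; operators TensorRT cannot handle fall back to the next provider in the list. The first launch can be noticeably slower while that engine is built. Once it is in place, this is one of the most effective ways to cut per-frame latency and stay competitive in virtual broadcasting.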
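The priority order can be sketched as a plain list. This assumes the launcher's short names `tensorrt` and `cuda` correspond to ONNX Runtime's `TensorrtExecutionProvider` and `CUDAExecutionProvider`; `pick_providers` is a hypothetical helper, not part of Deep Live Cam. The two options shown, `trt_fp16_enable` and `trt_engine_cache_enable`, are TensorRT execution-provider options in ONNX Runtime; caching the engine avoids rebuilding it on every launch.

```python
# Hypothetical helper: build an ordered ONNX Runtime provider list that prefers
# TensorRT, then CUDA, then CPU, keeping only what this machine actually offers.

PREFERENCE = (
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "CPUExecutionProvider",
)

def pick_providers(available, preference=PREFERENCE, trt_options=None):
    """Return providers in priority order, filtered by availability.

    `available` would normally come from onnxruntime.get_available_providers().
    TensorRT options, if given, are attached as a (name, options) tuple.
    """
    chosen = []
    for name in preference:
        if name not in available:
            continue  # skip providers this build/machine does not offer
        if name == "TensorrtExecutionProvider" and trt_options:
            chosen.append((name, trt_options))
        else:
            chosen.append(name)
    return chosen

if __name__ == "__main__":
    trt_opts = {"trt_fp16_enable": True, "trt_engine_cache_enable": True}
    gpu_box = ["CUDAExecutionProvider", "TensorrtExecutionProvider", "CPUExecutionProvider"]
    cpu_box = ["CPUExecutionProvider"]
    print(pick_providers(gpu_box, trt_options=trt_opts))
    print(pick_providers(cpu_box, trt_options=trt_opts))  # ['CPUExecutionProvider']
```

With ONNX Runtime installed, the result would be passed as `onnxruntime.InferenceSession(model_path, providers=pick_providers(onnxruntime.get_available_providers()))`. ONNX Runtime assigns each part of the graph to the first provider in the list that supports it, which is why list order expresses priority.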