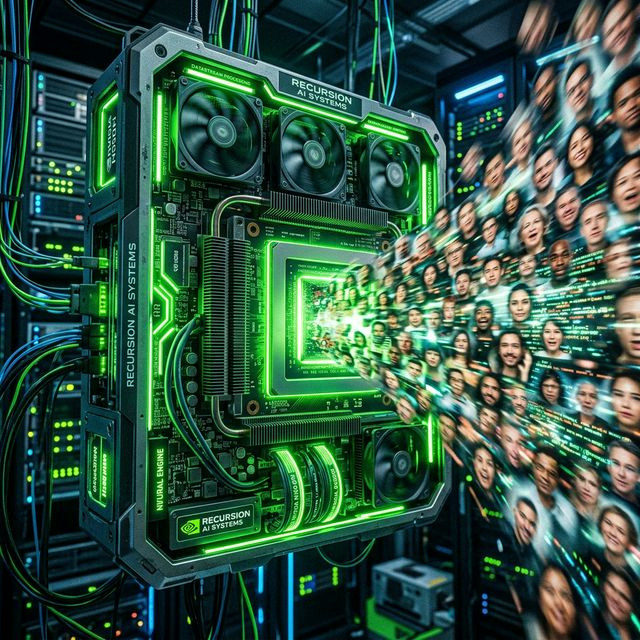

GPU Requirements for Seamless AI Deepfake Video Rendering


Frustrated users regularly download open-source AI face-swapping repositories, hit run, and watch their computer freeze. Pushing real-time face detection and swapping through an integrated Intel GPU is like hauling a semi-trailer with a lawnmower engine. Proper hardware is not a suggestion: these models need more memory and compute than integrated graphics can supply.

The VRAM Hierarchy (Video Random Access Memory)

PC gaming performance depends heavily on GPU clock speed and shader throughput for drawing polygons. For generative AI, VRAM capacity is usually the first constraint. The ONNX models, the face detectors (such as RetinaFace), and the GAN-based face enhancers must all be loaded into the GPU's memory at the same time to process video frames in real time.
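To make the memory argument concrete, here is a minimal sketch of the arithmetic, assuming only that a model's weights occupy roughly parameter count × bytes per parameter and that every model in the pipeline stays resident at once. The function names and the parameter counts in the usage section are hypothetical placeholders, not measured figures for RetinaFace, GFPGAN, or any specific ONNX model. Real usage is higher, because activations, the CUDA context, and library workspaces also consume VRAM.

```python
# Bytes per parameter for common inference precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gib(num_params: int, precision: str = "fp32") -> float:
    """Approximate memory needed just to hold one model's weights, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 1024**3


def pipeline_weights_gib(models: dict[str, tuple[int, str]]) -> float:
    """Sum weight memory for every model that must be loaded simultaneously.

    `models` maps a label to (parameter count, precision). Activations and
    runtime overhead are NOT included, so treat the result as a lower bound.
    """
    return sum(weights_gib(n, p) for n, p in models.values())


if __name__ == "__main__":
    # Hypothetical parameter counts, for illustration only.
    pipeline = {
        "face_detector": (30_000_000, "fp32"),
        "face_swapper": (500_000_000, "fp16"),
        "face_enhancer": (80_000_000, "fp32"),
    }
    print(f"{pipeline_weights_gib(pipeline):.2f} GiB of weights (lower bound)")
```

Because the totals add rather than overlap, enabling an extra model such as an enhancer raises the VRAM floor even if the GPU's clock speed is unchanged.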

- Below 6GB VRAM: Expect frequent out-of-memory crashes. In practice you will be pushed onto CPU execution, which slows a live stream to a slide-show pace of roughly 2 FPS.

- 8GB - 12GB VRAM (RTX 3060 / 4060 Ti): The baseline entry point for Deep Live Cam. With CUDA acceleration, 720p output at 30 FPS should be comfortable.

- 16GB - 24GB VRAM (RTX 4080 / 4090): The professional tier. There is enough headroom to enable the GFPGAN face enhancer, broadcast at 1080p60, and encode the stream in OBS with NVENC at the same time, with little risk of dropped frames.
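The tiers above can be expressed as a small lookup, together with the per-frame time budget that a target frame rate implies (1000 ms divided by the FPS: about 33 ms per frame at 30 FPS and about 17 ms at 60 FPS). This is a sketch that encodes only this article's thresholds, and the function names are hypothetical. The article does not describe cards between 6GB and 8GB or between 12GB and 16GB, so those ranges are reported as unlisted rather than guessed.

```python
def vram_tier(vram_gb: float) -> str:
    """Map a card's VRAM (in GB) to the tiers described in this article."""
    if vram_gb < 0:
        raise ValueError("VRAM cannot be negative")
    if vram_gb < 6:
        return "below minimum: expect crashes or CPU fallback"
    if vram_gb < 8:
        return "unlisted: between the 6GB floor and the 8GB baseline"
    if vram_gb <= 12:
        return "baseline: 720p at 30 FPS with CUDA"
    if vram_gb < 16:
        return "unlisted: between the baseline and professional tiers"
    # The article's top tier is 16-24GB; larger cards are treated the same.
    return "professional: enhancer, 1080p60, NVENC streaming"


def frame_budget_ms(fps: float) -> float:
    """Time available to detect, swap, and enhance one frame at a given FPS."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


if __name__ == "__main__":
    for gb in (4, 8, 12, 24):
        print(gb, "GB ->", vram_tier(gb))
    print(f"30 FPS budget: {frame_budget_ms(30):.1f} ms per frame")
    print(f"60 FPS budget: {frame_budget_ms(60):.1f} ms per frame")
```

The frame budget explains why the 1080p60 tier is demanding: every model in the pipeline must finish within roughly half the time available at 30 FPS.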

Nvidia vs. AMD in the AI Domain

AMD makes excellent gaming graphics cards, but its AI software stack (ROCm) lags behind Nvidia's CUDA ecosystem. Most open-source deepfake tools are developed and tested primarily against CUDA, often with optional TensorRT acceleration. An Nvidia card is therefore close to plug-and-play, whereas an AMD card often requires workarounds such as DirectML builds, which tend to deliver lower and less consistent frame rates.
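The difference shows up when a tool picks an ONNX Runtime execution provider. The sketch below orders whatever providers are available so that Nvidia paths are tried first and the CPU is always the final fallback. The provider name strings are ONNX Runtime's, but the preference order and the `choose_providers` helper are assumptions for illustration, not Deep Live Cam's actual selection logic, and which providers exist depends on the installed onnxruntime build.

```python
# Assumed preference order (not taken from any specific tool).
# Availability depends on the onnxruntime package and version installed.
PREFERRED_PROVIDERS = [
    "TensorrtExecutionProvider",  # Nvidia, TensorRT-optimized kernels
    "CUDAExecutionProvider",      # Nvidia, plain CUDA
    "ROCMExecutionProvider",      # AMD via ROCm (Linux builds)
    "DmlExecutionProvider",       # DirectML on Windows (AMD, Intel, Nvidia)
    "CPUExecutionProvider",       # always-available fallback
]


def choose_providers(available: list[str]) -> list[str]:
    """Return available providers in preference order, always ending with CPU."""
    chosen = [p for p in PREFERRED_PROVIDERS if p in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


if __name__ == "__main__":
    # Example input resembling an AMD machine with a DirectML build.
    print(choose_providers(["DmlExecutionProvider", "CPUExecutionProvider"]))
```

In practice, `available` would come from `onnxruntime.get_available_providers()`, and the result would be passed as the `providers` argument of `onnxruntime.InferenceSession`. On an Nvidia system the CUDA or TensorRT provider lands first; on an AMD Windows system the best option is often DirectML.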