The Evolution of Face Swapping: From Multi-Million Dollar CGI to Real-Time AI

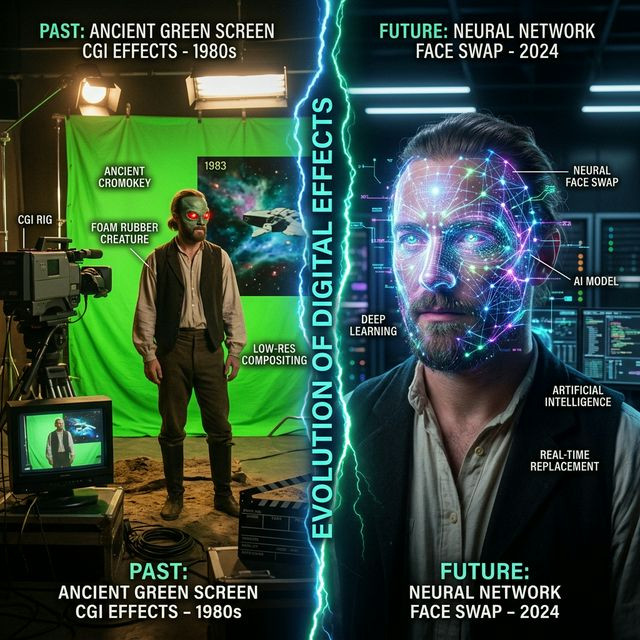

Decades ago, altering a human face on film required an enormous budget. Hollywood studios relied on physical silicone masks, complex green-screen rigs, motion-capture dots placed on actors' faces, and armies of 3D animators to clean up the results. A single scene could cost millions of dollars and take months to complete. Today, an open-source tool like Deep Live Cam achieves arguably better, unsettlingly consistent results in real time on a consumer laptop.

The Generative Adversarial Revolution

The paradigm shift came with the introduction of Generative Adversarial Networks (GANs) in 2014. Instead of manually painting pixels, researchers trained two opposing neural networks against each other. The "Generator" tries to produce a fake face, while the "Discriminator" tries to tell the fakes from real images. The two compete over many training iterations, each improving in response to the other, until the Discriminator can no longer reliably distinguish generated faces from real ones.
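To make the Generator-versus-Discriminator loop concrete, here is a deliberately tiny sketch in plain Python. It is a toy under stated assumptions, not how Deep Live Cam or any production face model is built: the "images" are single numbers, the Generator is a line `G(z) = a*z + b`, the Discriminator is a logistic unit `D(x) = sigmoid(w*x + c)`, and the gradients are written out by hand. The function names (`discriminator_step`, `generator_step`, `train_toy_gan`) are hypothetical, and the Generator uses the common "non-saturating" objective of maximizing `log D(fake)`.

```python
import math
import random


def sigmoid(t):
    # Clamp the input so math.exp cannot overflow on extreme values.
    t = max(-60.0, min(60.0, t))
    return 1.0 / (1.0 + math.exp(-t))


def discriminator_step(w, c, x_real, x_fake, lr):
    """One gradient-ascent step on log D(real) + log(1 - D(fake))."""
    d_real = sigmoid(w * x_real + c)
    d_fake = sigmoid(w * x_fake + c)
    w += lr * ((1.0 - d_real) * x_real - d_fake * x_fake)
    c += lr * ((1.0 - d_real) - d_fake)
    return w, c


def generator_step(a, b, w, c, z, lr):
    """One gradient-ascent step on log D(G(z)) with G(z) = a*z + b."""
    x_fake = a * z + b
    d_fake = sigmoid(w * x_fake + c)
    grad_x = (1.0 - d_fake) * w  # derivative of log D(x) with respect to x
    return a + lr * grad_x * z, b + lr * grad_x


def train_toy_gan(steps=2000, lr=0.05, real_mean=4.0, seed=0):
    """Alternate Discriminator and Generator updates on 1-D samples."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # Generator parameters (assumed starting values)
    w, c = 0.1, 0.0  # Discriminator parameters (assumed starting values)
    for _ in range(steps):
        x_real = rng.gauss(real_mean, 1.0)  # a "real" sample
        z = rng.gauss(0.0, 1.0)             # random noise fed to the Generator
        w, c = discriminator_step(w, c, x_real, a * z + b, lr)
        a, b = generator_step(a, b, w, c, z, lr)
    return a, b, w, c


if __name__ == "__main__":
    a, b, w, c = train_toy_gan()
    print(f"generator: a={a:.3f}, b={b:.3f}; discriminator: w={w:.3f}, c={c:.3f}")
```

Each iteration first nudges the Discriminator toward scoring real samples higher than fakes, then nudges the Generator toward samples the Discriminator scores as real. Real systems replace the two scalar models with deep convolutional networks and rely on automatic differentiation, and even this toy version is not guaranteed to settle: adversarial training can oscillate rather than converge.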

The Open-Source Explosion

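When this research spread into the open-source community around 2017 (the first widely shared face swaps famously appeared on large online forums), developers rapidly condensed the academic code into practical applications. DeepFaceLab dominated the offline rendering era, in which a model had to be trained on footage of each specific face before a video could be rendered. Deep Live Cam represents the pinnacle of the *second* phase: instant, zero-shot inference, where a face is swapped live from a single source image with no per-person training. What once required a Hollywood studio can now be done by a 15-year-old in their bedroom, putting visual effects in the hands of virtually anyone.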