The Math Behind the Mask: Understanding Facial Landmark Detection

The Math Behind the Mask: Understanding Facial Landmark Detection

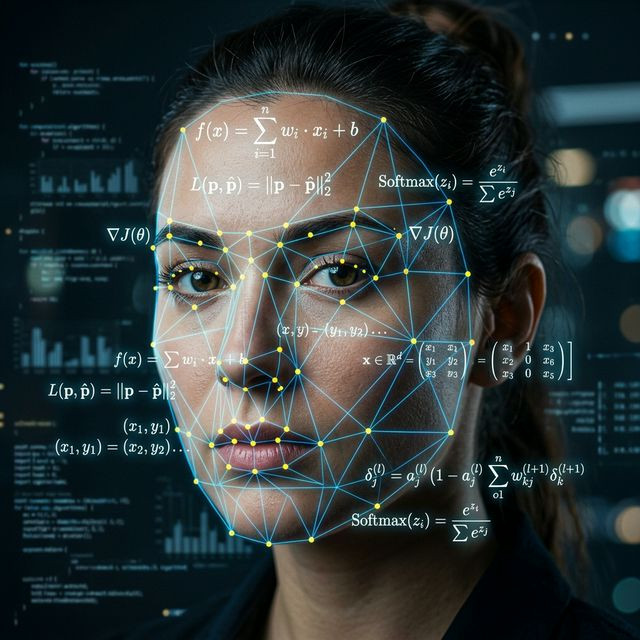

Before an AI can swap a face, it must first prove mathematically that a face exists in the frame. Deep Live Cam does not "see" eyes or a nose; it sees pixel arrays, hex values, and geometric spatial data. The backbone of this recognition process is known as Facial Landmark Detection.

The 68-Point Coordinate System

Most advanced models (like those utilizing Dlib or RetinaFace algorithms) map the human face onto a rigid 2D grid utilizing 68 specific anchor points. These points are universally distributed: Points 1-17 trace the jawline, 37-42 define the right eye, 49-68 map the complex inner and outer contours of the lips.

When you speak or smile, these 68 points violently drift across the X and Y axes. The software rapidly calculates the delta (change) in distance between these points several dozen times a second. If the distance between the upper and lower lip coordinates increases, the system determines the mouth is opening.

Matrix Affine Transformations

Once the landmarks are identified, the system must align the target face (your chosen image) to the source face (your webcam). Using severe Affine Matrix Transformations, it warps, stretches, and skews the 2D image until its 68 points perfectly overlap the geographical coordinates of your real face. This ruthless underlying geometry is what separates flawless, natural tracking from amateur, jittery filters.